
Join the Newsletter

Get the latest DevFest DC news in your inbox

Join our community for access to the latest DevFest DC events, resources, and exclusive promotions.


9AM — 6PM | Aug 28, 2026

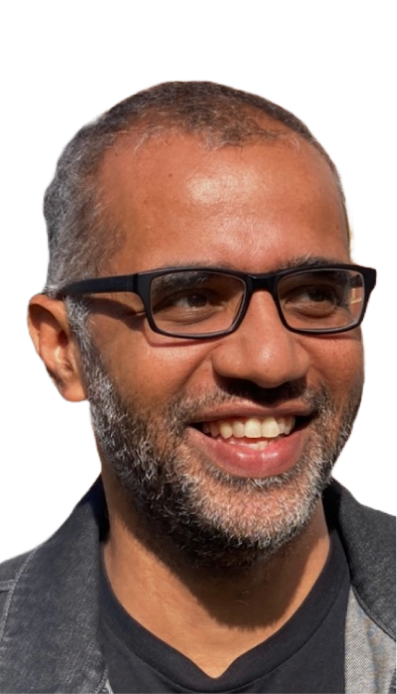

DevFestDC is an annual, community-led software engineering conference for software developers, architects, entrepreneurs, quality-focused individuals, and tech leaders.


Build the Future of AI

9AM — 6PM | Oct 3, 2025

9AM: Everyone

10AM: Keynote Speakers

11AM: Everyone

12PM: Everyone

1PM: Speakers

5PM: Everyone


Fuse CENTER at George Mason University

